Machine learning

To conclude our foray into software and software-related topics, we will go over machine learning. Machine learning is essential to the modern world and finds many applications in the sciences (we will go over examples in later chapters), but it can be quite difficult to understand. Much of the difficulty comes from its heavily developer-oriented nature, the work of preparing datasets (which are often incomplete and in a format unsuitable for machine learning), and the complexity of tuning hyperparameters (that is, configuration variables) to get a neural network to fit a dataset. Machine learning is both an art and a science, and a finicky one at that. But it is also, for better or worse, a powerful technology. And for any data-heavy field, it is an invaluable tool.

Fundamentals of machine learning

Machine learning is the process of using a computer to automatically create a mathematical model. The main difference between machine learning and other modeling techniques is that machine learning doesn’t give a computer an algorithm for a specific task, but instead helps the computer develop an algorithm on its own. Machine learning models have proven to be massively successful for a variety of tasks, including classification, object detection, and machine translation, among many others.

One of the simplest machine learning models is the multi-layer perceptron (MLP). This type of model is based on two mathematical operations: matrix-vector multiplication and vector addition. It takes in an input (for instance, size, position, or color) encoded as a vector $\vec{x}$, then performs the operation $\vec{y} = W\vec{x} + \vec{b}$. The matrix $W$ is known as the weights, and the vector $\vec{b}$ is known as the biases.
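As a minimal sketch of the weights-and-biases operation described above, the following pure-Python function computes $W\vec{x} + \vec{b}$ for a single layer. The function name `affine_layer` and the numbers are hypothetical and chosen only for illustration. A full MLP would stack several such layers with nonlinear functions in between, which is left out here.

```python
def affine_layer(weights, biases, x):
    """Return W x + b, where `weights` is a list of rows and `x` is a list."""
    if len(weights) != len(biases):
        raise ValueError("weights and biases must have the same number of rows")
    outputs = []
    for row, bias in zip(weights, biases):
        if len(row) != len(x):
            raise ValueError("each weight row must match the input length")
        # Dot product of one weight row with the input, plus that row's bias.
        outputs.append(sum(w * xi for w, xi in zip(row, x)) + bias)
    return outputs


# Hypothetical example: 3 input features mapped to 2 outputs.
W = [[1.0, 0.0, -1.0],
     [0.5, 2.0, 0.0]]
b = [0.5, -1.0]
x = [2.0, 1.0, 3.0]

y = affine_layer(W, b, x)
print(y)  # [-0.5, 2.0]
```

In practice a numerical library would perform this operation far more efficiently; the explicit loop is only meant to show what the matrix-vector product and the bias addition do.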

In machine learning, we divide a dataset into two parts: the inputs (also called the features), and the outputs (also called the labels). For instance, if we were to build a model to predict whether an image is a cat or a dog, then the features would be a list of images and the labels would be a list of ["cat", "cat", "dog", "dog", ...] that corresponds to the correct animal for each image. When the model outputs a prediction $\hat{y}$ for some given input vector $\vec{x}$ (for instance, a vector of image pixels), we compare it to the real value $y$ in the dataset.
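To make the split into features and labels concrete, here is a small sketch using a made-up dataset. The pixel values and the variable names are hypothetical; real images would contain many more pixels. The sketch also encodes the text labels as integers, since a model works with numbers rather than strings.

```python
# Hypothetical toy dataset: each sample is (pixel values, animal name).
samples = [
    ([0.1, 0.8, 0.3, 0.5], "cat"),
    ([0.9, 0.2, 0.4, 0.7], "dog"),
    ([0.2, 0.7, 0.1, 0.6], "cat"),
    ([0.8, 0.3, 0.5, 0.9], "dog"),
]

# Split the dataset into inputs (features) and outputs (labels).
features = [pixels for pixels, _ in samples]
labels = [animal for _, animal in samples]

# Map each class name to an integer so the labels become numeric.
class_names = sorted(set(labels))
class_index = {name: i for i, name in enumerate(class_names)}
encoded_labels = [class_index[name] for name in labels]

print(labels)          # ['cat', 'dog', 'cat', 'dog']
print(encoded_labels)  # [0, 1, 0, 1]
```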

The mathematical way to represent this comparison is the loss function, which measures the error in the model’s predictions. The loss function compares each predicted output $\hat{y}_i$ with its real value $y_i$ in the dataset and squares the difference. For $N$ data points, a standard form of this squared-error loss is the mean squared error:

$$
L = \frac{1}{N} \sum_{i=1}^{N} \left(\hat{y}_i - y_i\right)^2
$$
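The squared-difference comparison can be sketched in a few lines of Python. This assumes the mean-squared-error form of the loss (the average of the squared differences); the function name and the sample numbers are hypothetical.

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences between predictions and real values."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    if not predictions:
        raise ValueError("at least one data point is required")
    squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared_errors) / len(squared_errors)


# Hypothetical predictions and real values for three data points.
predicted = [2.5, 0.0, 2.0]
actual = [3.0, -0.5, 2.0]

loss = mean_squared_error(predicted, actual)
print(loss)  # (0.25 + 0.25 + 0.0) / 3, roughly 0.1667
```

A loss of zero means every prediction matches its real value exactly; larger values mean larger errors.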

The model “learns” by minimizing the loss function until the error of its predictions reaches a minimum. This is accomplished by the process of gradient descent, which comes from multivariable calculus. Recall that the gradient points in the direction of steepest ascent, meaning that the negative of the gradient points in the direction of steepest descent. Therefore, we calculate the gradient of the loss function $L$ with respect to the weights $W$ and biases $\vec{b}$, and adjust them by a small step in the direction of the negative gradient. With a step size $\eta$ (called the learning rate), the standard update rule is:

$$
W \leftarrow W - \eta \frac{\partial L}{\partial W}, \qquad \vec{b} \leftarrow \vec{b} - \eta \frac{\partial L}{\partial \vec{b}}
$$

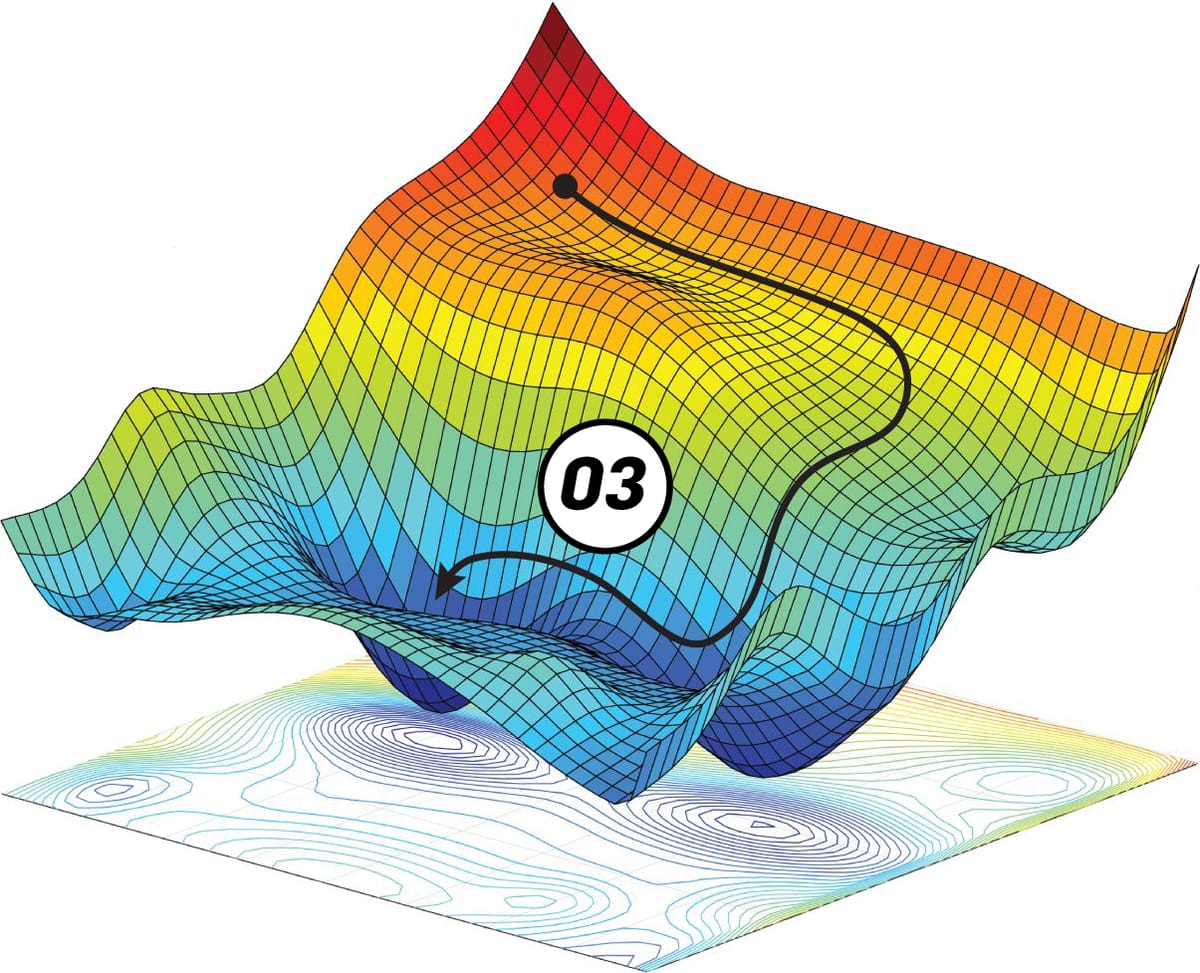

If we think of the loss function as a surface $L(W, \vec{b})$ over the weights and biases, then the gradient descent procedure is equivalent to finding a path to a minimum of the surface, as we show in Figure 1.

Figure 1: Gradient descent uses the gradient of a function to decide which direction to move in, iteratively approaching a minimum. Image credit: Paperspace
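The picture of walking downhill on a surface can be reproduced numerically. The sketch below uses a hypothetical bowl-shaped surface $f(x, y) = x^2 + y^2$ as a stand-in for a real loss function, with an assumed learning rate of 0.1 and an arbitrary starting point. It records the path taken so the steady decrease can be checked.

```python
def loss_surface(x, y):
    """A simple bowl-shaped surface with its minimum at (0, 0)."""
    return x ** 2 + y ** 2


def gradient(x, y):
    """Analytic gradient of loss_surface: (df/dx, df/dy)."""
    return 2 * x, 2 * y


def descend(start, learning_rate=0.1, steps=50):
    """Follow the negative gradient from `start` and return the visited points."""
    x, y = start
    path = [(x, y)]
    for _ in range(steps):
        gx, gy = gradient(x, y)
        # Step against the gradient, scaled by the learning rate.
        x -= learning_rate * gx
        y -= learning_rate * gy
        path.append((x, y))
    return path


path = descend(start=(3.0, -2.0))
heights = [loss_surface(x, y) for x, y in path]
print(heights[0], heights[-1])  # starts at 13.0 and ends very close to 0
```

Each step here shrinks both coordinates by the same factor, so the path heads straight toward the minimum. On a real loss surface the path is generally curved and may settle in a local minimum instead.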

We may repeat gradient descent for as many iterations as needed until we are satisfied with the model’s performance (that is, until the error of the model is low enough for our purposes). The model has now “learned” from the dataset, and, if training went well, it can make reasonably accurate predictions even on data that was not in its dataset.
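The whole procedure (predict, measure the loss, update, repeat) can be sketched as a short training loop. To keep the example small, it fits a one-weight, one-bias linear model $\hat{y} = wx + b$ rather than a full MLP, and uses the mean squared error as the loss. The dataset, learning rate, tolerance, and function name are all hypothetical choices made for illustration.

```python
def train_linear_model(xs, ys, learning_rate=0.05, tolerance=1e-6,
                       max_iterations=10_000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    loss = float("inf")
    for _ in range(max_iterations):
        # Prediction errors for the current weight and bias.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        loss = sum(e ** 2 for e in errors) / n
        if loss < tolerance:
            break  # the error is low enough for our purposes
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Gradient descent update.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b, loss


# Hypothetical dataset generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b, final_loss = train_linear_model(xs, ys)
prediction = w * 10.0 + b  # x = 10 is not in the training data
print(w, b, prediction)    # close to 2, 1, and 21
```

The final prediction is made for an input that never appeared in the training data, which is the sense in which the model has "learned" the underlying relationship rather than memorized the dataset.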

However, while the MLP is relatively simple to understand compared to other model types, it is often harder to train and use than more mathematically sophisticated machine learning models, such as Kernel Ridge Regression (KRR), Extra Trees Regression (ETR), Random Forest Regression (RFR), and Support Vector Regression (SVR). There are also other types of neural networks, such as Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Transformers. Here, we will not try to explain every type of machine learning model; instead, we will explain the broad concepts and show how you can use machine learning in practice.

Coding the model

As the details of machine learning are quite complicated, it is convenient to use libraries that already implement many of the functions and classes for making machine learning models.